Welcome back to The AGI Observer and welcome to the final piece of this mini-series. The goal of this publication has been simple: give a clear overview of the state of the art and the most likely paths to AGI, in plain language, with enough structure that readers can track progress without getting lost in hype.

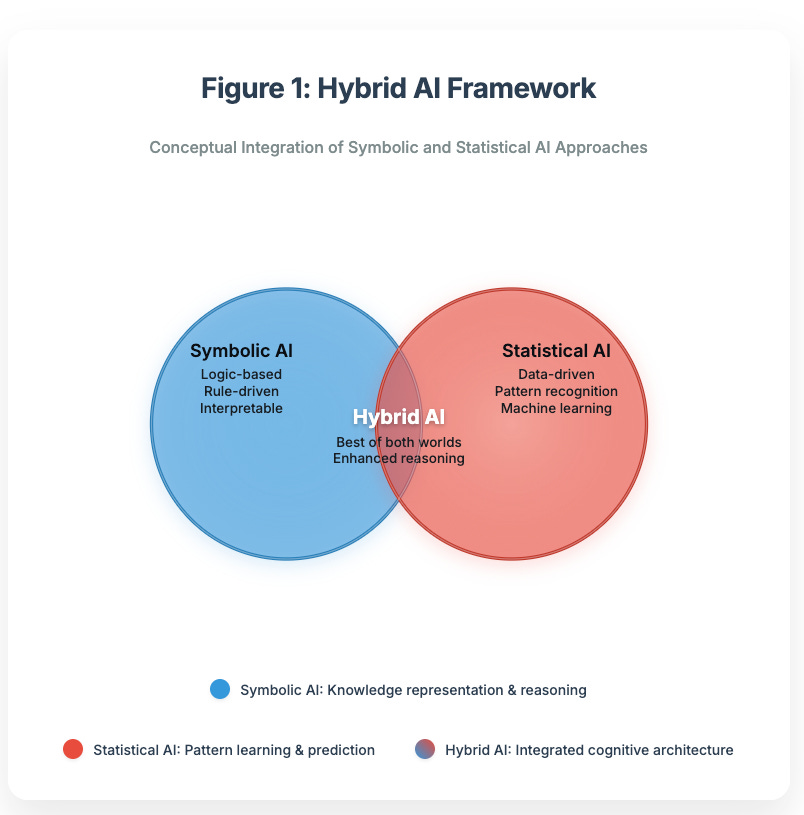

In the first four parts we mapped the major “engines” of progress: Scaling Deep Learning, Neuro-Symbolic Systems, Cognitive Architectures, and Evolution + Reinforcement Learning. In Part 5, we cover the pathway that, in practice, is already becoming the industry default: Integrated Hybrids, the idea that the first real AGI won’t be a single monolith but a convergent system in which multiple approaches reinforce each other.

As before, we end with a sample educational virtual portfolio to map this pathway to real companies and infrastructure. Educational only; not financial advice.

Executive summary

Thesis. The most realistic route to AGI is convergence: foundation models for perception and language, retrieval for grounded knowledge, planning and tool-use for execution, verifiers for reliability, and RL-style feedback loops for adaptation, all orchestrated as one system.

Why it matters. Hybrids are how you get from “impressive answers” to consistent outcomes: less hallucination, more auditability, better long-horizon performance, and safer control surfaces.

Near term. Expect an explosion of “agentic stacks” in enterprise and consumer products: tool-using copilots, workflow agents with memory, code-writing systems evaluated on real tasks, and embodied agents learning in simulators.

Risk. The failure mode shifts from “model made a mistake” to “system interaction broke”: orchestration bugs, security issues (prompt injection/tool misuse), hidden dependencies, and unclear accountability.

What to watch. End-to-end benchmarks (coding, web interaction, long-horizon tasks), stronger evaluators, agentic retrieval, persistent memory with provenance, and hybrid systems that can self-correct under pressure.

1) What “Integrated Hybrids” means in practice

A hybrid system isn’t “a bigger model.” It’s a stack, and the stack matters because each layer compensates for another layer’s weakness.

A typical integrated hybrid looks like this:

Generalist core model (language + multimodal perception)

Retrieval / external memory (documents, databases, vector search)

Planner (breaks goals into steps, chooses tools, schedules actions)

Tool layer (search, code execution, spreadsheets, APIs, enterprise systems)

Verification layer (tests, constraints, rule checks, citations/provenance)

Feedback / learning loop (human preference, AI feedback, RL-style optimization, and/or continual skill libraries)

Guardrails & governance (policy filters, logging, permissions, monitoring)

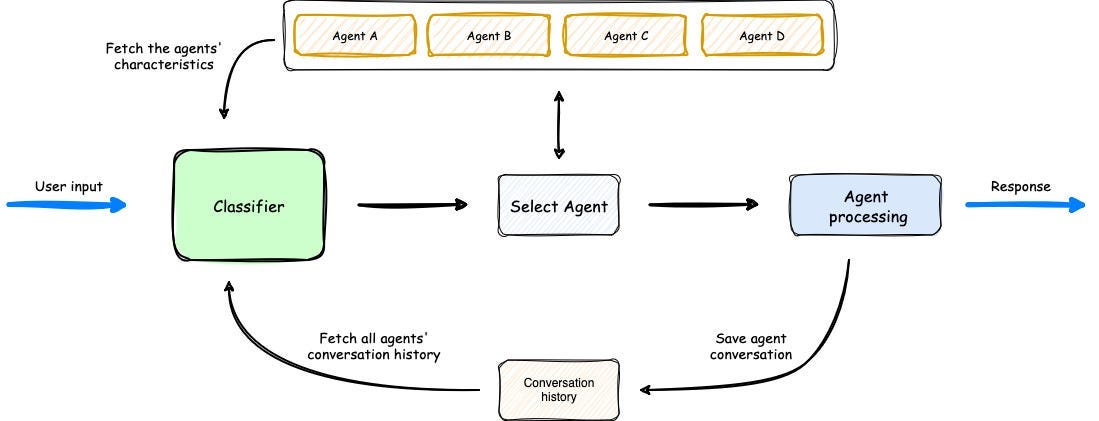

This is why “agents” are not one thing: they’re systems engineering around a capable model.
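To make the layering concrete, here is a minimal Python sketch of the stack as a chain of plain functions that all write to one shared log. Every name in it (`Trace`, `retrieve`, `plan`, `run_tool`, `verify`, `run_stack`) and the toy VAT data are illustrative assumptions, not any vendor's API, and the core model is replaced by hard-coded stubs.

```python
from dataclasses import dataclass, field


@dataclass
class Trace:
    """Governance layer: an append-only log of what each layer did."""
    events: list = field(default_factory=list)

    def log(self, layer: str, detail: str) -> None:
        self.events.append((layer, detail))


# Hypothetical toy knowledge base standing in for the retrieval layer.
DOCS = {"vat_rate": "0.20"}


def plan(goal: str, trace: Trace) -> list:
    # A real planner would be model-driven; this one is hard-coded.
    steps = ["lookup vat_rate", "multiply"]
    trace.log("planner", " -> ".join(steps))
    return steps


def retrieve(key: str, trace: Trace) -> str:
    value = DOCS[key]
    trace.log("retrieval", f"{key}={value}")
    return value


def run_tool(net: float, rate: float, trace: Trace) -> float:
    result = round(net * (1 + rate), 2)
    trace.log("tool", f"calculator returned {result}")
    return result


def verify(net: float, gross: float, trace: Trace) -> bool:
    ok = gross >= net  # simple constraint: gross cannot be below net
    trace.log("verifier", "pass" if ok else "fail")
    return ok


def run_stack(goal: str, net: float) -> tuple:
    trace = Trace()
    plan(goal, trace)
    rate = float(retrieve("vat_rate", trace))
    gross = run_tool(net, rate, trace)
    if not verify(net, gross, trace):
        raise ValueError("verification failed")
    return gross, trace


gross, trace = run_stack("price with VAT", 100.0)
```

The point of the sketch is the shape rather than the arithmetic: each layer is replaceable on its own, and the log records which layer did what.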

2) Why this pathway matters

Reliability beats raw cleverness

Scaling improves capability, but most real-world failures are not “IQ problems.” They are workflow problems: missing context, wrong assumptions, no grounding, no checks, no stable memory.

Hybrids fix that by design: retrieval grounds knowledge, tools turn intent into action, and verifiers reduce silent failure. Retrieval-augmented generation (RAG) is a canonical example of this “parametric + non-parametric memory” approach.
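As a rough illustration of the retrieval step, the sketch below ranks a tiny in-memory corpus by word overlap and puts the best match, with its source id, in front of the question. The corpus, the overlap scoring, and the function names are assumptions chosen for brevity; production systems typically use vector search and a real model call instead.

```python
def tokenize(text: str) -> set:
    """Lowercase and split on whitespace, dropping basic punctuation."""
    return set(text.lower().replace("?", "").replace(".", "").split())


# Hypothetical mini-corpus; the ids act as provenance handles.
CORPUS = {
    "doc-1": "The refund window is 30 days from delivery.",
    "doc-2": "Shipping to Europe takes 5 business days.",
    "doc-3": "Support is available on weekdays.",
}


def retrieve(query: str, k: int = 1) -> list:
    """Rank documents by word overlap with the query (toy scoring)."""
    q = tokenize(query)
    scored = sorted(
        CORPUS.items(),
        key=lambda item: len(q & tokenize(item[1])),
        reverse=True,
    )
    return scored[:k]


def build_prompt(query: str) -> str:
    """Place retrieved passages, tagged with source ids, before the question."""
    hits = retrieve(query)
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in hits)
    return f"Answer using only these sources:\n{context}\n\nQuestion: {query}"


prompt = build_prompt("How long is the refund window?")
```

Because the source id travels with the passage, a downstream verifier can check that any claim in the answer points back to a retrieved document.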

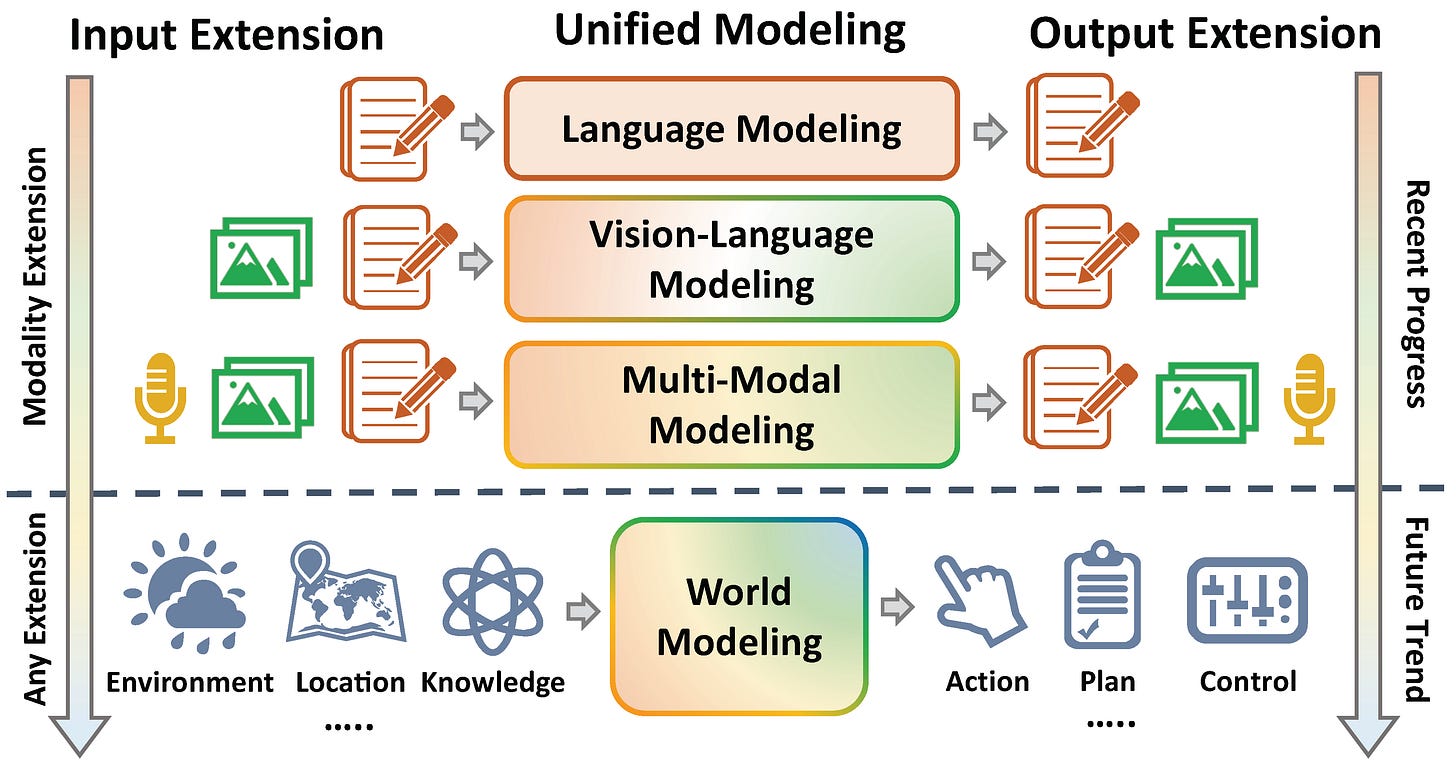

Generality is multi-modal and action-based

AGI that only speaks is limited. We’re seeing serious effort toward agents that can perceive and act across environments, from robotics to simulated 3D worlds, using multimodal models as the glue.

Safety becomes an architecture problem

When you build systems out of modules, you can add explicit choke points: permissions, auditing, policy constraints, and “stop and ask” behaviors. Constitutional-style approaches also show how feedback-driven training can shape behavior toward safer outputs.
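One way to picture such a choke point is a single gate function that every tool call must pass through. The sketch below is an assumption-laden toy: the policy table, tool names, and return strings are hypothetical, and a real deployment would tie decisions to identity and scoped credentials.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ASK_HUMAN = "ask_human"
    DENY = "deny"


# Hypothetical policy table: tool name -> decision.
POLICY = {
    "search_docs": Decision.ALLOW,
    "send_email": Decision.ASK_HUMAN,
}

AUDIT_LOG = []


def gate(tool: str, args: dict) -> Decision:
    """Single choke point: look up the policy and record the decision."""
    decision = POLICY.get(tool, Decision.DENY)  # unknown tools are denied
    AUDIT_LOG.append({"tool": tool, "args": args, "decision": decision.value})
    return decision


def call_tool(tool: str, args: dict, approved_by_human: bool = False) -> str:
    decision = gate(tool, args)
    if decision is Decision.DENY:
        return "blocked"
    if decision is Decision.ASK_HUMAN and not approved_by_human:
        return "paused: waiting for approval"
    return f"executed {tool}"
```

With this table, `call_tool("search_docs", {})` runs immediately, `call_tool("send_email", {"to": "a@example.com"})` pauses until a human approves, and any tool missing from the table is blocked by default. All three outcomes land in the audit log.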

3) How it works under the hood

Here are the most common “hybrid primitives” showing up across frontier systems:

Reason + Act loops

Instead of producing a single answer, the model alternates between reasoning and actions (searching, reading, calculating, querying tools). The ReAct line of work reports that this interleaving can reduce hallucination and improve task completion in interactive settings.
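The loop itself is simple enough to sketch. In the toy version below, `stub_model` is a hypothetical stand-in for a language model that follows a fixed script, and the only tool is a small arithmetic evaluator; the control flow (think, act, observe, repeat until a final answer) is the part that carries over.

```python
import ast
import operator

OPS = {ast.Add: operator.add, ast.Sub: operator.sub,
       ast.Mult: operator.mul, ast.Div: operator.truediv}


def calculator(expression: str):
    """Evaluate +, -, *, / arithmetic by walking the AST (no eval())."""
    def ev(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in OPS:
            return OPS[type(node.op)](ev(node.left), ev(node.right))
        raise ValueError("unsupported expression")
    return ev(ast.parse(expression, mode="eval").body)


TOOLS = {"calculator": calculator}


def stub_model(question: str, observations: list) -> dict:
    """Hypothetical scripted policy standing in for a real model."""
    if not observations:
        return {"thought": "I should compute this rather than guess.",
                "action": "calculator", "input": "17 * 23"}
    return {"thought": "The tool result answers the question.",
            "final": str(observations[-1])}


def react(question: str, max_steps: int = 5) -> tuple:
    observations, transcript = [], []
    for _ in range(max_steps):
        step = stub_model(question, observations)
        transcript.append(step["thought"])
        if "final" in step:
            return step["final"], transcript
        # Act, then feed the observation back into the next reasoning step.
        observations.append(TOOLS[step["action"]](step["input"]))
    raise RuntimeError("step budget exhausted")


answer, transcript = react("What is 17 * 23?")
```

The step budget matters in practice: without it, a confused policy can loop on tool calls indefinitely.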

Self-taught tool use

Toolformer-style training shows a model can learn when to call APIs and how to integrate results back into generation, essentially turning tools into an extension of the model’s cognition.

Multi-agent orchestration

Frameworks like AutoGen formalize the idea that complex work is better handled by specialized roles (planner, coder, reviewer, researcher) that coordinate via structured conversation and tool calls.
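The role-based pattern can be sketched in plain Python without any framework. The example below deliberately does not use AutoGen's API; the `Message` type, the three role functions, and the scripted "buggy first draft" are all assumptions made to show the message routing.

```python
from dataclasses import dataclass


@dataclass
class Message:
    sender: str
    content: str


def planner(task: str) -> Message:
    return Message("planner", f"Write a function for: {task}")


def coder(request: Message, attempt: int) -> Message:
    # Hypothetical behaviour: the first draft has a bug, the second is fixed.
    body = "return a - b" if attempt == 0 else "return a + b"
    return Message("coder", f"def add(a, b):\n    {body}")


def reviewer(draft: Message) -> Message:
    namespace = {}
    exec(draft.content, namespace)  # acceptable here: the code is our own stub
    ok = namespace["add"](2, 3) == 5
    return Message("reviewer", "approve" if ok else "reject: add(2, 3) != 5")


def orchestrate(task: str, max_rounds: int = 3) -> list:
    """Route messages between roles until the reviewer approves."""
    log = [planner(task)]
    for attempt in range(max_rounds):
        draft = coder(log[0], attempt)
        verdict = reviewer(draft)
        log += [draft, verdict]
        if verdict.content == "approve":
            break
    return log


conversation = orchestrate("add two numbers")
```

Here the reviewer rejects the first draft and approves the second, so the conversation has five messages. The structured log is what makes the exchange auditable afterwards.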

Skill libraries and continual improvement

Some agent designs store reusable, composable skills (often as code) and keep expanding them over time, compounding capabilities beyond a single session. Voyager is a clean example of this pattern.
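A skill library in this sense can be as small as a named registry of callables in which newer skills are built from older ones. The sketch below is a toy under stated assumptions: the `SkillLibrary` class and the crafting-style skills are invented for illustration and are not Voyager's actual code or data.

```python
class SkillLibrary:
    """Stores named skills as callables; new skills may call older ones."""

    def __init__(self):
        self._skills = {}

    def add(self, name, fn, description):
        self._skills[name] = {"fn": fn, "description": description}

    def use(self, name, *args):
        return self._skills[name]["fn"](*args)

    def names(self):
        return sorted(self._skills)


library = SkillLibrary()

# Two primitive skills (hypothetical game-like actions).
library.add("gather_wood", lambda n: {"wood": n}, "collect n units of wood")
library.add("craft_planks", lambda inv: {"planks": inv["wood"] * 4},
            "turn each wood into four planks")

# A composed skill that reuses the two primitives above.
library.add(
    "planks_from_scratch",
    lambda n: library.use("craft_planks", library.use("gather_wood", n)),
    "gather wood, then craft planks",
)

result = library.use("planks_from_scratch", 3)
```

Because the composed skill is stored under its own name, later sessions can call it directly instead of rediscovering the sequence.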

Embodiment and grounded generalists

Work like PaLM-E and Gato shows the push toward a single policy that spans modalities and tasks, bridging language, vision, and action spaces (a key ingredient if “generality” is meant literally).

4) Where we are today

The strongest signal that hybrids are “the default” is that progress is increasingly measured on end-to-end tasks, not just model benchmarks.

Coding as a real-world environment: SWE-bench evaluates models/agents on real GitHub issues, and SWE-bench Verified adds human validation for reliability, exactly the kind of evaluator layer hybrid systems need.

Interactive web environments: WebShop provides grounded, multi-step web interaction tasks (browse, compare, purchase-like decisions).

Embodied benchmarks: ALFWorld connects text-based planning to embodied actions, a practical bridge for sim-to-real thinking.

Agentic product direction: Gemini-era agent work (e.g., SIMA-style agents in 3D environments) reflects the broader industry direction toward perception + reasoning + action systems.

The pattern is consistent: as soon as you measure outcomes rather than answers, hybrids tend to outperform “just a model,” because they can search, verify, execute, and recover.
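Outcome-based evaluation of this kind boils down to a strict rule: a task counts as resolved only if every test passes. The sketch below shows that rule on a hypothetical leap-year task with two candidate patches. It mirrors the spirit of test-based coding benchmarks, not the actual harness of SWE-bench or any other suite.

```python
def run_tests(candidate, tests) -> bool:
    """A task is resolved only if every test case passes."""
    for args, expected in tests:
        try:
            if candidate(*args) != expected:
                return False
        except Exception:
            return False  # a crash counts as a failure
    return True


# Hypothetical task: implement a leap-year check.
TESTS = [((2000,), True), ((1900,), False), ((2024,), True), ((2023,), False)]


def naive_patch(year):
    return year % 4 == 0  # plausible-looking, but wrong for 1900


def full_patch(year):
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


submissions = {"naive": naive_patch, "full": full_patch}
results = {name: run_tests(fn, TESTS) for name, fn in submissions.items()}
resolved_rate = sum(results.values()) / len(results)
```

The naive patch would look fine in a demo on 2024, which is the gap between "impressive answers" and measured outcomes that end-to-end benchmarks are meant to close.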

5) Bottlenecks and failure modes

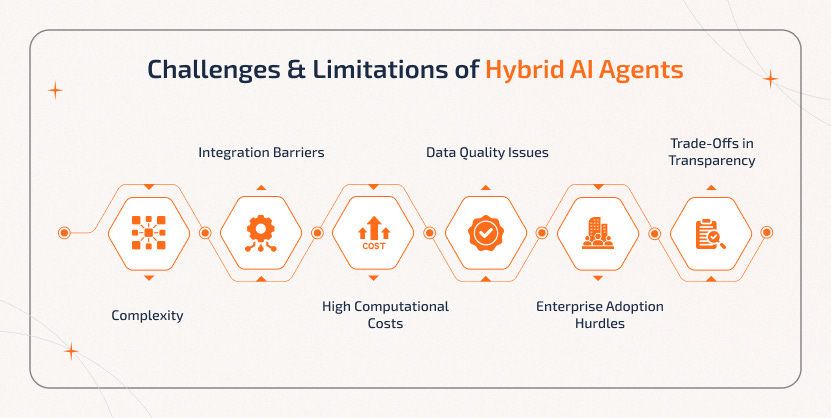

Integrated hybrids solve many problems, but they introduce new ones.

Orchestration complexity

More modules means more interfaces. Failures often come from mismatched assumptions between planner, tools, memory, and verifier.

Security and prompt injection

Retrieval and web browsing introduce adversarial inputs. Tool access raises the stakes (data leakage, unintended actions).

Latency and cost

Multi-step loops and multiple agents can be slow and expensive unless the stack is optimized.

Evaluation becomes the moat

The biggest bottleneck is often knowing whether it worked. Good evaluators are scarce, and optimization pressure tends to exploit evaluator weaknesses.

Accountability and governance

When a composite system acts, who is responsible: the model, the tool provider, the workflow designer, the deployer?

6) What to watch next

If you want early signals that integrated hybrids are moving from “useful” to “general,” watch for:

Reliable, repeatable performance on real tasks (not one-off demos), especially on verified coding and long-horizon benchmarks.

Agentic retrieval where the system decides what to fetch, how to drill down, when to stop, and can cite provenance (early research is already pushing here).

Persistent memory with audit trails (what it stored, why it stored it, where it came from).

Verifiable reasoning: unit tests, formal constraints, checkers, and “proof-like” traces where possible.

Embodied generalization across environments, not just within one simulator.
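The "persistent memory with audit trails" signal is easy to picture in code: every stored item carries what was stored, why, and where it came from. The sketch below is a minimal in-memory version under stated assumptions; the `MemoryRecord` fields, the `AuditedMemory` class, and the ticket-style source id are hypothetical.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class MemoryRecord:
    content: str    # what was stored
    reason: str     # why it was stored
    source: str     # where it came from
    stored_at: str  # when it was stored (UTC, ISO 8601)


class AuditedMemory:
    def __init__(self):
        self._records = []

    def remember(self, content: str, reason: str, source: str) -> MemoryRecord:
        if not source:
            raise ValueError("refusing to store a memory without provenance")
        record = MemoryRecord(content, reason, source,
                              datetime.now(timezone.utc).isoformat())
        self._records.append(record)
        return record

    def recall(self, keyword: str) -> list:
        """Case-insensitive substring match over stored content."""
        return [r for r in self._records if keyword.lower() in r.content.lower()]

    def audit_trail(self) -> list:
        return [asdict(r) for r in self._records]


memory = AuditedMemory()
memory.remember("Customer prefers email contact", "stated preference", "ticket-1042")
hits = memory.recall("email")
```

Rejecting records without a source is a design choice here: it keeps unattributed content from becoming trusted context later.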

7) Sample educational virtual portfolio: Integrated Hybrid stack

Education only; not financial advice. Equal weight within buckets.

Agent platforms & enterprise workflows (25%)

Microsoft (MSFT), Alphabet/Google (GOOGL), Amazon (AMZN), ServiceNow (NOW)

Data + retrieval + memory backbone (20%)

Snowflake (SNOW), MongoDB (MDB), Elastic (ESTC), Oracle (ORCL)

Observability, evaluation, and operations (15%)

Datadog (DDOG), Atlassian (TEAM), Cloudflare (NET)

Compute & semiconductor supply chain (25%)

NVIDIA (NVDA), AMD (AMD), TSMC (TSM), ASML (ASML)

Security & identity for agentic systems (10%)

CrowdStrike (CRWD), Palo Alto Networks (PANW)

Simulation, industrial, and embodied execution (5%)

Ansys (ANSS), ABB (ABB)

Why this stack: integrated hybrids pull simultaneously on compute, cloud/tooling, retrieval/data systems, monitoring/evaluation, and security, and, increasingly, simulation + real-world execution.
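For readers who want the per-name numbers, "equal weight within buckets" means each ticker's weight is its bucket weight divided by the number of names in that bucket. The short sketch below does that arithmetic using only the buckets and tickers listed above; the function name `ticker_weights` is an assumption, and the output is illustrative, not a recommendation.

```python
# Bucket weight (percent) and tickers, copied from the list above.
BUCKETS = {
    "Agent platforms & enterprise workflows": (25, ["MSFT", "GOOGL", "AMZN", "NOW"]),
    "Data + retrieval + memory backbone": (20, ["SNOW", "MDB", "ESTC", "ORCL"]),
    "Observability, evaluation, and operations": (15, ["DDOG", "TEAM", "NET"]),
    "Compute & semiconductor supply chain": (25, ["NVDA", "AMD", "TSM", "ASML"]),
    "Security & identity for agentic systems": (10, ["CRWD", "PANW"]),
    "Simulation, industrial, and embodied execution": (5, ["ANSS", "ABB"]),
}


def ticker_weights(buckets: dict) -> dict:
    """Split each bucket's weight equally across its tickers."""
    weights = {}
    for bucket_pct, tickers in buckets.values():
        for ticker in tickers:
            weights[ticker] = bucket_pct / len(tickers)
    return weights


weights = ticker_weights(BUCKETS)
total = sum(weights.values())
```

This works out to 6.25% for each name in the two 25% buckets, 5% for each name in the 20%, 15%, and 10% buckets, and 2.5% for each name in the 5% bucket, for a total of 100% across 19 tickers.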

Closing thought

This mini-series started with a question that matters more than any single model release: what are the plausible routes to general intelligence, and how do we track them responsibly?

If Part 1 was about raw capability, Part 2 about consistency, Part 3 about organized cognition, and Part 4 about adaptation pressure, then Part 5 is the synthesis:

AGI may arrive not as one breakthrough, but as a convergent system where multiple imperfect methods finally lock together into something robust.

Prepared by AI Monaco

AI Monaco is a leading-edge research firm that specializes in utilizing AI-powered analytics and data-driven insights to provide clients with exceptional market intelligence. We focus on offering deep dives into key sectors like AI technologies, data analytics and innovative tech industries.